Manus vs. OpenClaw: Why Agentic AI Is Killing Traditional SaaS — And I Tested Both to Find Out Who’s Doing It Faster

Two weeks ago, I cancelled three SaaS subscriptions in one afternoon. Not because I was cutting costs. Because two AI agents had started doing the same jobs better — autonomously, without me setting up a single workflow.

The tools were Manus and OpenClaw. The subscriptions I cancelled were a project management platform, a research tool, and a scheduling app I’d been paying for since 2022. I want to be precise about what happened, because “AI is replacing software” sounds like hype until you watch it happen to your own credit card statement.

This is what I actually tested, what broke, and what the results mean for anyone running a business on a SaaS stack in April 2026.

What Are Manus and OpenClaw — And Why Should You Care?

Before the comparison, a quick grounding for anyone who hasn’t used either yet.

Manus is a fully autonomous AI agent — meaning it doesn’t wait for you to prompt it step by step. You give it a goal. It breaks the goal into tasks, executes them across multiple tools and browsers, monitors its own progress, and reports back when it’s done or when it needs a decision. Think of it less like a chatbot and more like a junior employee who never sleeps and never asks what “ASAP” means.

OpenClaw is the open-source answer to Manus — built on a similar agentic architecture but designed for teams who want full visibility into what the agent is doing and why. Every action is logged. Every decision is explainable. It’s slower than Manus in raw execution, but it’s auditable in a way that matters if you’re in a regulated industry or simply don’t trust black boxes with your business data.

Both represent what the industry is calling “agentic AI” — systems that don’t just respond to inputs but pursue outcomes across time, tools, and contexts. This is the architectural shift that’s making traditional point-solution SaaS look increasingly redundant.
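If you think in code, the goal-to-tasks loop described above can be sketched roughly like this. It is a toy illustration, not how Manus or OpenClaw is actually built: the `Agent` class, its `plan` and `execute` callables, and the log format are all hypothetical names invented for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Agent:
    # Hypothetical planner: turns a goal into an ordered list of task names.
    plan: Callable[[str], List[str]]
    # Hypothetical executor: runs one task and returns a result string,
    # or None when it cannot proceed without a human decision.
    execute: Callable[[str], Optional[str]]
    # Human-readable record of every action taken (the "audit trail" idea).
    log: List[str] = field(default_factory=list)

    def run(self, goal: str) -> str:
        for task in self.plan(goal):
            result = self.execute(task)
            if result is None:
                # Stop and hand control back instead of guessing.
                self.log.append(f"{task}: blocked, needs a decision")
                return "needs decision"
            self.log.append(f"{task}: {result}")
        return "done"


# Stand-in planner and executor, purely for demonstration.
agent = Agent(
    plan=lambda goal: ["find competitors", "collect pricing", "write report"],
    execute=lambda task: None if task == "collect pricing" else "ok",
)
status = agent.run("competitive research report")
print(status)
print(agent.log)
```

The point of the sketch is the shape: one goal in, a sequence of logged actions, and an early exit when a decision is needed. How much of that log is shown to you is where the two tools differ.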

The Test: Three Real Workflows, Two Agents, One Week

I ran both tools on the same three tasks I’d been using dedicated SaaS products for. Here’s what happened.

Task 1 — Competitive Research Report

Previously handled by: a research SaaS I was paying $49/month for.

I gave both agents the same brief: “Research the top five competitors to [my product], summarize their pricing, key features, recent product updates, and any negative reviews from the last 90 days. Format as a report I can share with my team.”

Manus completed the task in 14 minutes. It browsed competitor websites, pulled G2 and Trustpilot reviews, cross-referenced LinkedIn for recent announcements, and delivered a structured 1,800-word report with a comparison table. The output was good enough to send without editing.

OpenClaw took 31 minutes — but its action log showed me exactly which sources it visited, why it weighted certain reviews over others, and where it flagged uncertainty. For a report going to stakeholders, that audit trail has real value. The output quality was comparable to Manus.

The SaaS tool I was replacing? It would have given me a dashboard of data that I then had to synthesize into a report myself. The agent eliminated that last step entirely.

The research SaaS I cancelled was $49/month — $588/year. Manus Pro is $49/month with no task limits on research workflows. Same price, but the agent also handles my scheduling and project tracking. The SaaS industry built its margins on selling you one tool per problem. Agentic AI charges you once for the agent that replaces all of them.

Task 2 — Project Coordination Across a 4-Person Team

Previously handled by: a project management SaaS at $32/month.

This is where things got genuinely complicated — and where I have to be honest about what broke.

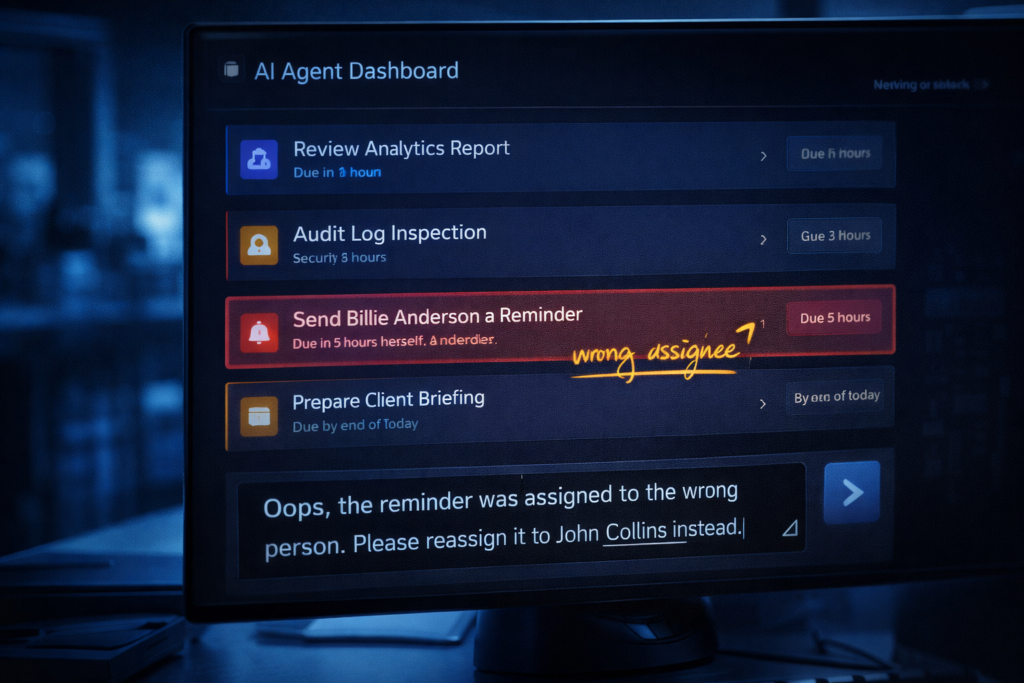

I asked Manus to manage a product launch coordination task: track deliverables from four team members, send reminders when deadlines were approaching, flag dependencies, and update a shared status doc. Standard project management, nothing exotic.

For the first three days, it was remarkable. Manus proactively messaged team members via Slack, updated the shared doc, and flagged a dependency conflict I hadn’t noticed — a designer was waiting on copy that the writer thought had already been sent.

On day four, it sent a reminder to the wrong person for a task that had already been marked complete. The error was minor, but it created confusion on a deadline day. When I dug into the action log (Manus doesn’t surface its logs as clearly as OpenClaw does), I found the agent had misread a status update in the shared doc because of an ambiguous formatting convention I’d been using for years without thinking about it.

The lesson: agentic AI inherits your organizational chaos. If your documentation is ambiguous, the agent will act confidently on that ambiguity and won’t always tell you it did. The prompt that fixed it:

“Before acting on any task status, confirm completion only if the status field contains exactly ‘Done’ or ‘Complete.’ Treat all other entries as in-progress regardless of surrounding context.”

No issues after that. But I spent 40 minutes tracking down a problem that a cleaner system would have prevented. OpenClaw, with its explicit action logging, would likely have surfaced this ambiguity before acting on it. That is a meaningful advantage for team workflows where errors have downstream consequences.
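Expressed as code, the rule in that prompt is a strict allow-list check rather than a fuzzy "does this look finished?" judgment. Here is a minimal sketch. The function name and the sample status strings are made up for illustration, and ignoring surrounding whitespace while keeping case sensitivity is my assumption about what "exactly" should mean.

```python
# Only these two values count as finished, mirroring the prompt above.
DONE_VALUES = {"Done", "Complete"}


def is_complete(status_field: str) -> bool:
    """Return True only when the status field is exactly 'Done' or 'Complete'."""
    # Assumption: leading/trailing whitespace is ignored; case is not.
    return status_field.strip() in DONE_VALUES


# Hypothetical status entries of the kind a shared doc might contain.
statuses = ["Done", "Complete", "done?", "Done (pending review)", "sent", ""]
flags = [is_complete(s) for s in statuses]
print(flags)  # → [True, True, False, False, False, False]
```

Everything that isn't an exact match falls through to "in progress", which is the safer default when a wrong reminder costs more than a redundant one.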

Task 3 — Calendar and Scheduling Optimization

Previously handled by: a scheduling SaaS at $15/month.

This was the least dramatic test and the clearest win for agentic AI. I asked both tools to review my calendar for the coming week, identify meetings that could be async, suggest consolidations, protect deep work blocks, and draft decline messages for anything non-essential.

Both handled it cleanly. Manus was faster. OpenClaw’s decline message drafts were slightly better-calibrated to tone — it had parsed enough of my previous communication patterns from context to know I prefer direct over apologetic.

I cancelled the scheduling SaaS the same day. There was nothing it did that either agent couldn’t replicate with better output.
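With all three subscription prices on the table, the annual math is easy to check. The sketch below uses only the monthly figures quoted in this post ($49, $32, and $15 for the three tools, $49 for Manus Pro). It leaves out OpenClaw, since I haven't given a price for it, and it ignores any usage-based charges.

```python
# Monthly prices quoted in this post, in US dollars.
monthly_saas = {"research": 49, "project management": 32, "scheduling": 15}
manus_pro_monthly = 49

saas_annual = 12 * sum(monthly_saas.values())   # three separate tools
agent_annual = 12 * manus_pro_monthly           # one agent subscription
annual_difference = saas_annual - agent_annual

print(saas_annual, agent_annual, annual_difference)  # → 1152 588 564
```

So the three tools together came to $1,152 a year against $588 for the agent, a difference of $564 on these numbers alone.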

Where Each Tool Actually Wins

Manus wins on speed and autonomy. If you want results fast and you trust the agent to make reasonable decisions without narrating every step, Manus is the better daily driver. It’s optimized for execution, not explanation. For solo operators and founders who move fast and clean up later, that’s exactly the right tradeoff.

OpenClaw wins on transparency and team trust. Every action is logged and human-readable. For regulated industries, client-facing work, or any situation where you need to explain what your AI did and why, OpenClaw’s audit trail is not optional — it’s the product. The slower execution speed is the price of accountability, and for many workflows, it’s worth paying.

The SaaS tools they’re replacing? They win on nothing except familiarity and integrations — and both of those advantages are eroding faster than most SaaS founders want to admit.

Final Verdict — What to Actually Do With This Information

Use Manus if you’re a solo founder, freelancer, or small team that wants maximum output with minimum configuration. Start with research and scheduling tasks — those are the clearest wins with the lowest error risk.

Use OpenClaw if you manage a team, work in a client-facing or regulated environment, or simply want to understand what your AI is doing before it does it. The open-source architecture also means you can self-host if data privacy is a concern.

Don’t cancel your SaaS stack on day one. Run the agent in parallel for two weeks before making any subscription decisions. My project management error on day four would have been much more painful if I’d already cancelled the backup tool. Agentic AI is ready for most workflows — but it rewards deliberate adoption over impulsive migration.

- Try Manus — Best for solo operators who want fast, autonomous execution across research, scheduling, and coordination tasks.

- Try OpenClaw — Best for teams who need full action logging, explainability, and open-source flexibility.

I may earn a small commission if you sign up through these links, at no extra cost to you. I only recommend tools I actually use.

📩 Next up: I’m giving Manus a full month to run my content calendar.

Every brief, every draft request, every publish schedule — fully delegated to the agent. I’ll report back on what it got right, what it got wrong, and whether I still have a job at the end of it. Subscribe to The Edge to get that report first.


SaaS built its empire on one insight: charge per problem, forever. One tool for email, one for projects, one for scheduling, one for research. It was brilliant while it lasted.

Agentic AI doesn’t solve one problem. It pursues one goal — yours — across every tool, every tab, every workflow. The subscription economy isn’t dying because AI is cheaper. It’s dying because the architecture that made it necessary no longer exists.

Audit your SaaS stack this week. Not to cut costs. To find out what you’re still paying for that an agent could do better starting tomorrow.

– Alex